TL;DR

The coverage gap. Agents act across shell commands, direct API calls, database writes, and file system operations. None of this touches the gateway. Routing all of it through a proxy is architecturally unworkable. k

The endpoint blind spot. Coding agents on developer machines (Cursor, Claude Code, Copilot, Codex) typically connect to local MCP servers via stdio, a local transport with no network hop. Built-in MCP connections in tools like Claude Code cannot be intercepted by a gateway at all. Covering remote connections means deploying and enforcing gateway infrastructure on every developer machine.

The context gap. Gateways evaluate each request in isolation. They cannot distinguish a legitimate cleanup task from a compromised agent executing a data destruction sequence. The same call, the same credentials, the same “allowed” verdict.

MCP itself is deeply insecure and potentially losing relevance. Tool poisoning attacks succeed at a 72.8% rate in peer-reviewed benchmarks, and MCP’s position as the dominant agent protocol is already being questioned. Firms are also already reporting a move from the protocol altogether.

System Hardening and Preventative Controls Are More Vulnerable Than Ever

It's been a rough few weeks for anyone betting on static security controls. In March, the TeamPCP campaign compromised LiteLLM, the most popular LLM proxy, installing credential-stealing code that executes on every Python startup. In April, Anthropic announced a model it won't release because, according to their red team, it finds and exploits zero-days fully autonomously. One attacked the infrastructure gateways are built on. The other made the static rules they enforce look quaint.

This article examines one of the main control categories we see organizations relying on for agent security, MCP and LLM gateways, and why we think the approach misses the nature of how agents actually operate.

What Gateways Actually Do

There are two types. LLM gateways (Portkey, LiteLLM, and similar) sit between your application and the model API. They handle model routing, cost tracking, rate limiting, and in some cases prompt and response filtering. MCP gateways sit between agents and MCP servers. They enforce tool-call policy, inject credentials, and apply allow/deny rules to which tools an agent can access.

Both deliver genuine value. Centralized API key management means agents are not storing secrets in plaintext config files. Traffic logging helps to give you an inventory of which MCP servers your agents talk to. Basic guardrails mean you can scope which tools are available to which agents. These are real capabilities for IT and platform engineering teams working to impose hygiene on agent infrastructure.

The problem starts when organizations treat these capabilities as synonymous with security.

What MCP Gateways Miss: Agent Activity Outside the Protocol

An inline proxy only governs traffic that flows through it. For AI agents, large classes of activity never do.

Unsanctioned agents are the first gap. The organization’s standard may be Claude Code, but a developer who prefers Codex installs it without security involvement. Teams spin up agents in CI/CD pipelines, in notebooks, through SaaS platforms. None of these are configured to route through a corporate gateway. In many cases, the people who built them do not know the gateway exists. Organizations running their first agent visibility scans are consistently finding agents they did not know about.

Non-MCP actions are the second, and arguably larger, gap. Production agents do not stay neatly inside one protocol. An agent might read data through MCP, process it with a Python script via shell execution, write results directly to a Postgres database, and post a summary to Slack through the platform’s native SDK. The gateway saw step one. Steps two through four were invisible. Shell access is often the most dangerous capability an agent has, and the gateway has nothing to say about it.

The Endpoint Blind Spot: Where Gateways Struggle Miserably

The transport does not cooperate. Cursor, Claude Code, Copilot, and Codex connect to MCP servers primarily via stdio: local process-to-process communication with no network hop. A cloud-hosted MCP gateway physically cannot intercept a stdio connection between two processes on a developer’s laptop. Most MCP servers are designed to be pulled from a repository and run locally. Companies rarely host managed MCP servers for their employees.

Even remote connections require per-machine deployment. When endpoint agents do use remote MCP servers over HTTP, the gateway still needs to sit on each developer’s machine to intercept the traffic. That means deploying, configuring, and maintaining proxy infrastructure across the entire fleet. This is significant operational overhead, and it introduces a single point of failure for every machine it runs on.

The reliability model breaks either way. A centralized gateway is a single point of failure: if it goes down, agent traffic either stops entirely or flows without any controls at all. Distributing the gateway to every developer machine avoids that, but replaces it with hundreds of individual points of failure that security teams have to deploy, monitor, and maintain. Neither model scales cleanly.

Enforcement is a configuration nightmare. Claude Code alone has four layers of configuration scopes. Cursor stores MCP configs in dotfiles. API keys sit in plaintext on disk. Even if you deploy a gateway, enforcing consistent policy across multiple config layers per agent, per tool, per developer machine is operationally painful. The gateway’s credential injection value proposition assumes it brokers the secrets. On endpoints, the secrets are already on the machine.

Some connections cannot be intercepted at all. Built-in MCP servers in tools like Claude Code use internal transport mechanisms that a gateway has no way to sit between. You close the door to those entirely.

The MCP servers are unmanaged. Enterprise MCP gateway deployments assume known, vetted, managed servers. On developer endpoints, agents connect to community-published MCP servers from GitHub, npm, and other registries. No vetting process, no security review, no central registry. Research from the MCPTox benchmark found that 5.5% of publicly available MCP servers already contain poisoned tool descriptions. Those are the servers endpoint agents connect to.

The richest attack surface is local. Shell access, file system operations, code execution. On top of that, developers typically have local access to Kubernetes, GitHub, Vault, Bitwarden, and other sensitive infrastructure. Security researcher Johann Rehberger demonstrated a prompt-based command and control system where compromised agents maintain persistent access through heartbeat files, config writes, and memory files, all local file system operations that never touch any gateway.

On endpoints, the agent, the MCP servers, the credentials, the dangerous capabilities, and the persistence surfaces are all on the same machine. The gateway is in a data center somewhere, governing a connection that may not even exist. And if it is deployed locally, it becomes a single point of failure: one misconfiguration, one crash, one bypass, and the entire machine is ungoverned.

Knowing that agent processes exist on developer machines is useful for governance. You can count them. But counting agents is not the same as seeing what they do. The only way to get endpoint visibility is to build something that runs on the endpoint and understands agents. That is a fundamentally different architecture from a network proxy.

Gateways See Calls, Not Context: Policy Without Understanding

Set aside the traffic the gateway never sees. Even for the traffic that does flow through it, the quality of the security decisions a gateway can make is constrained by what it knows at decision time.

Gateways evaluate each tool call in isolation. They see a request payload, check it against policy rules, and forward or block. What they cannot do is reason about the broader context of an agent’s session: what instructions the agent was given, what actions it has taken so far, how this particular request fits into the sequence of everything else the agent has done.

An agent deleting temporary files as part of a cleanup task is routine. That same agent deleting files after being hijacked via indirect prompt injection, as part of a data destruction sequence it was never supposed to execute, is an attack. To the gateway, both look identical: delete file X, called by agent Y, using authorized credentials. Allowed.

Anthropic’s submission to NIST’s RFI on agentic AI security identifies this as an entirely new risk class: a well-functioning, non-compromised agent that operates inside its granted permissions but takes harmful actions for reasons unrelated to compromise or misuse. An agent that misinterprets an instruction, discovers an unintended path, or combines information in ways the user never authorized. Static policy has no answer for this. The call was allowed. The session was compromised. The gateway worked exactly as designed.

The Indirect Prompt Injection Arena, a large-scale competition where 464 participants attacked 13 frontier models with 272,000 prompts, confirmed that tool-use contexts are the most vulnerable setting for prompt injection, with a 4.82% attack success rate. The attacks were concealment-aware: they had to both execute the harmful action and hide the compromise from the user. A gateway monitoring tool calls would see authorized requests returning clean responses while the session was actively compromised underneath.

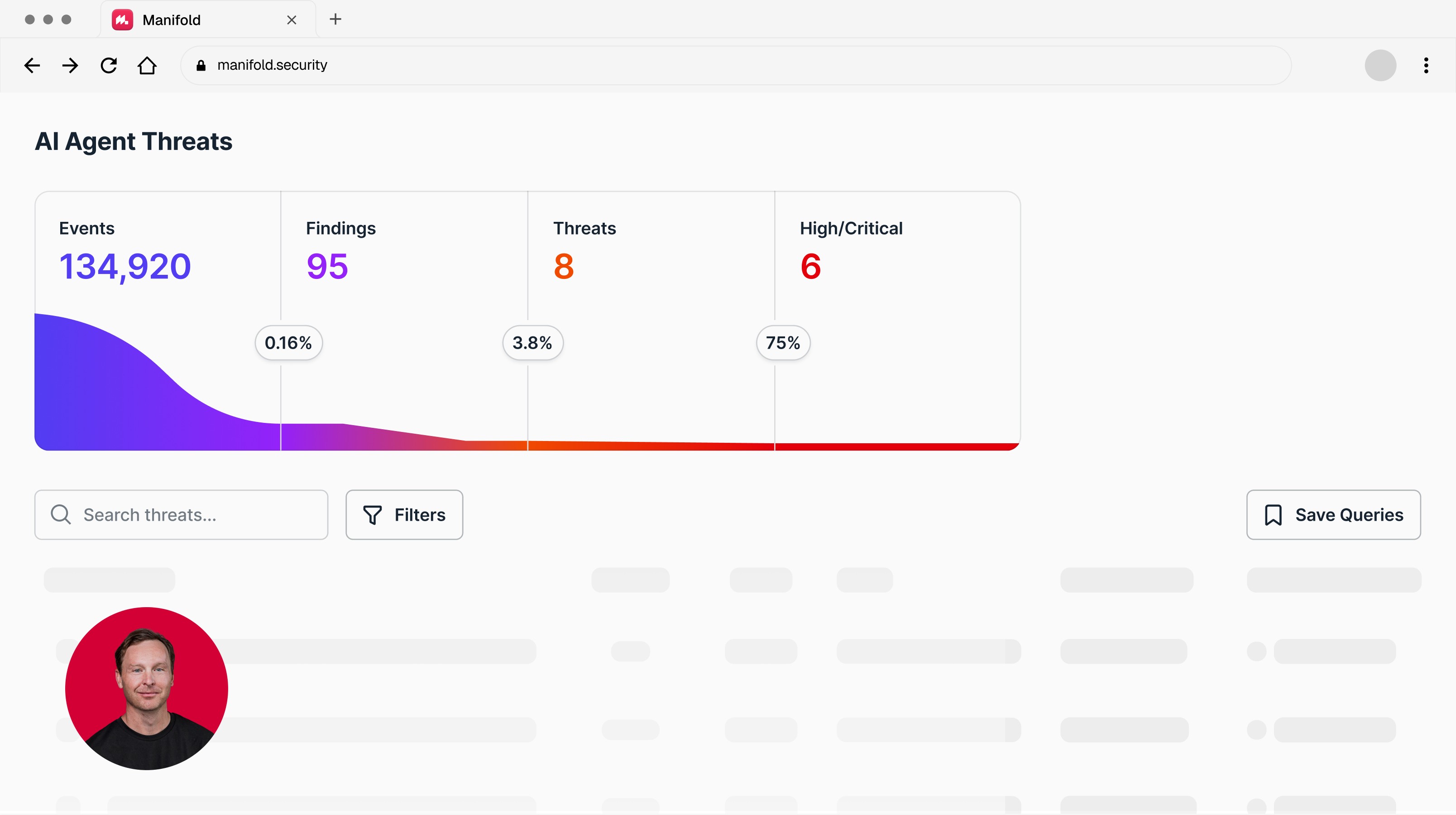

Want visibility into what your coding agents are actually doing at runtime? Talk to Manifold.

Gateways Are Built on Shaky Ground: MCP Is Insecure and Its Dominance Is Uncertain

The arguments above apply to any gateway architecture. This section addresses two problems specific to building security on a protocol chokepoint.

MCP itself is deeply insecure. The MCPTox benchmark, published at AAAI 2026, tested 20 prominent LLM agents against tool poisoning attacks using 45 real-world MCP servers and 353 tools. The results: o1-mini showed a 72.8% attack success rate. More capable models were often more vulnerable, because the attack exploits their superior instruction-following abilities. Agents rarely refused these attacks; the highest refusal rate (Claude 3.7 Sonnet) was under 3%. Separately, the TIP framework achieved a 95% attack success rate using tree-based adaptive search to craft stealthy injection payloads.

The supply chain risk is not theoretical. The TeamPCP campaign in March 2026 hit three targets in five days: Trivy (100 million Docker Hub downloads), Checkmarx KICS, and LiteLLM, the most widely used LLM proxy. LiteLLM’s compromised PyPI package installed a file that executes on every Python startup, exfiltrating every credential on the machine. The infrastructure that gateways are built to govern is itself a target.

We also do not know if MCP is here to stay. At the Ask 2026 conference, Perplexity CTO Denis Yarats announced the company is moving away from MCP internally, citing context window overhead and authentication friction. Cloudflare built code-generation alternatives. The pattern is spreading across production deployments: when teams hit scale requirements for uptime, latency, and cost, they reach for APIs and CLIs over protocol abstractions. If agents increasingly call APIs directly, a security architecture built on an MCP gateway secures a shrinking share of agent traffic. Building your security strategy on a protocol whose dominance is uncertain is a compounding risk.

What Agent Security Actually Requires

Agent security starts with visibility. Before you can set effective policy, you need to understand what your agents are actually doing. Most organizations have this backwards: they start with static rules and treat observability as a later investment.

SACR's Runtime Security for AI Agents report identifies a three-layer model for securing agents at runtime: deterministic governance (static policy), non-deterministic behavioral analysis (continuous observability and baseline tracking), and non-deterministic governance (intent-based authorization and dynamic escalation). Their finding is clear: deterministic governance alone is table stakes but insufficient. The behavioral analysis layer provides the visibility and real-time data that make everything above it possible. Without it, the other layers have nothing to work with.

Runtime observability and behavioral analysis is the foundation. This shifts the question from “does this agent have permission?” to “does what this agent is doing make sense?” That requires agent-aware behavioral telemetry: what actions the agent took, in what sequence, compared against its baseline and its stated objective. You cannot govern what you cannot see. Start here.

Static policy enforcement has a role. Allow/deny rules, least-privilege scoping, credential management, tool access controls. Gateways can live in this layer. The limitation is that static policies govern a system that does not behave statically. An agent can operate entirely within the boundaries you set and still cause damage because the permissions were correct but the behavior was not. There is also an operational reality: managing and maintaining static policies across a growing fleet of agents, tools, and config layers is demanding, and few security teams have the staffing to keep up.

Dynamic governance and active response closes the loop. When behavior deviates, you need mechanisms that can tighten permissions in real time, route to human review, or kill the session. The response surface extends beyond the proxy: revoking credentials, quarantining endpoints, triggering incident workflows.

The gap between observability and static policy is where attacks succeed. A gateway returning “allowed” while an agent systematically exfiltrates customer records, one authorized query at a time, is not a misconfiguration. It is a static control doing exactly what it was designed to do, in the absence of the behavioral detection that should have caught the pattern.

Want to get to grips with what your agents are actually doing at runtime? That's what Manifold is built for. Let’s talk.

Latest articles

RESEARCH

The Gateway Gap: Why AI Agent Security Needs More Than a Chokepoint

Apr 16, 2026

Oleksandr Yaremchuk

CTO & Co-Founder

Nate Demuth

Chief Architect

RESEARCH

Two Git Commands Fooled Claude Into Merging Malicious Code

Apr 15, 2026

Ax Sharma

Head of Research

Oleksandr Yaremchuk

CTO & Co-Founder

PRODUCT

Introducing Manifest: Supply Chain Intelligence for the AI Agent Ecosystem

Apr 14, 2026

Oleksandr Yaremchuk

CTO & Co-Founder

Neal Swaelens

CEO & Co-Founder