TL;DR

Skills, plugins, MCP servers, extensions. AI agents now have a software supply chain. It's growing faster than the security around it.

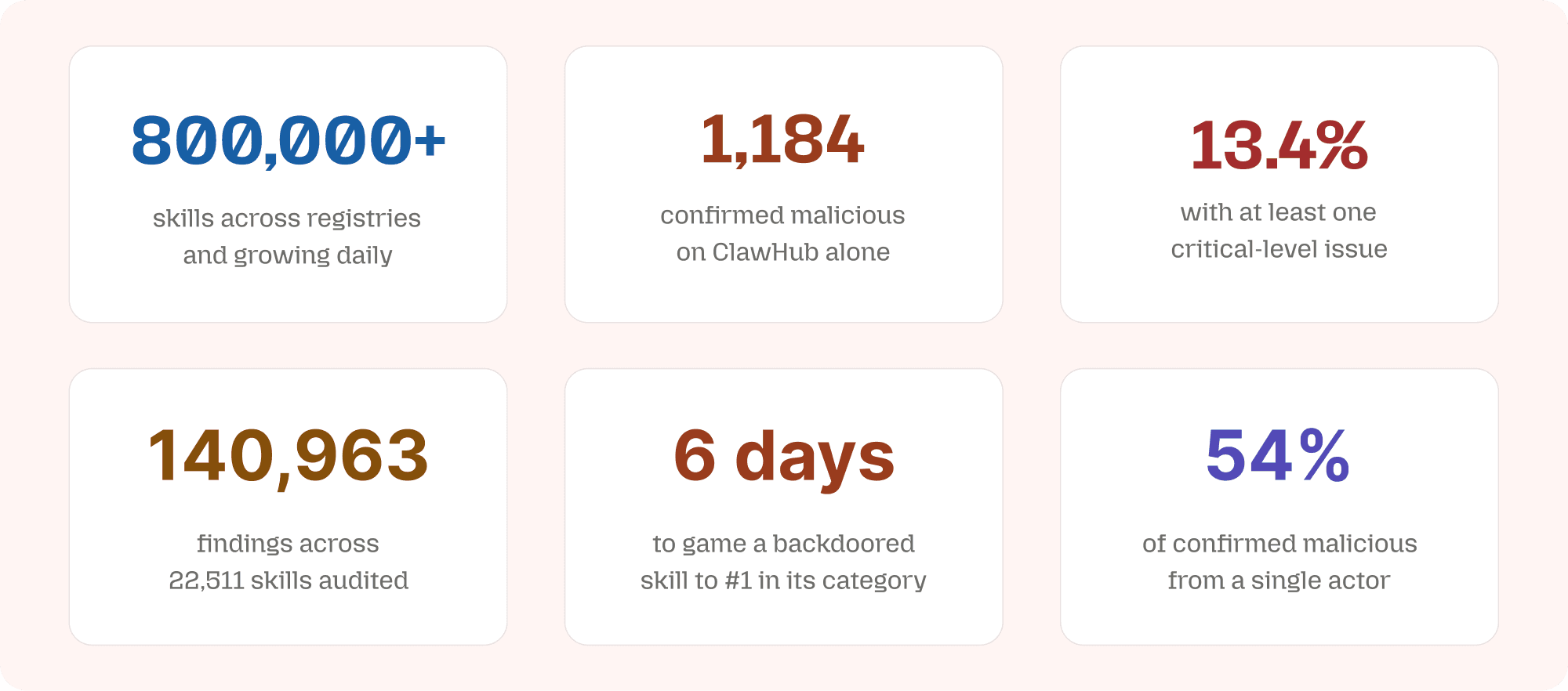

800,000+ skills listed across registries and growing by hundreds daily. 1,184 confirmed malicious on ClawHub alone. A backdoored skill was gamed to #1 in its category within days.

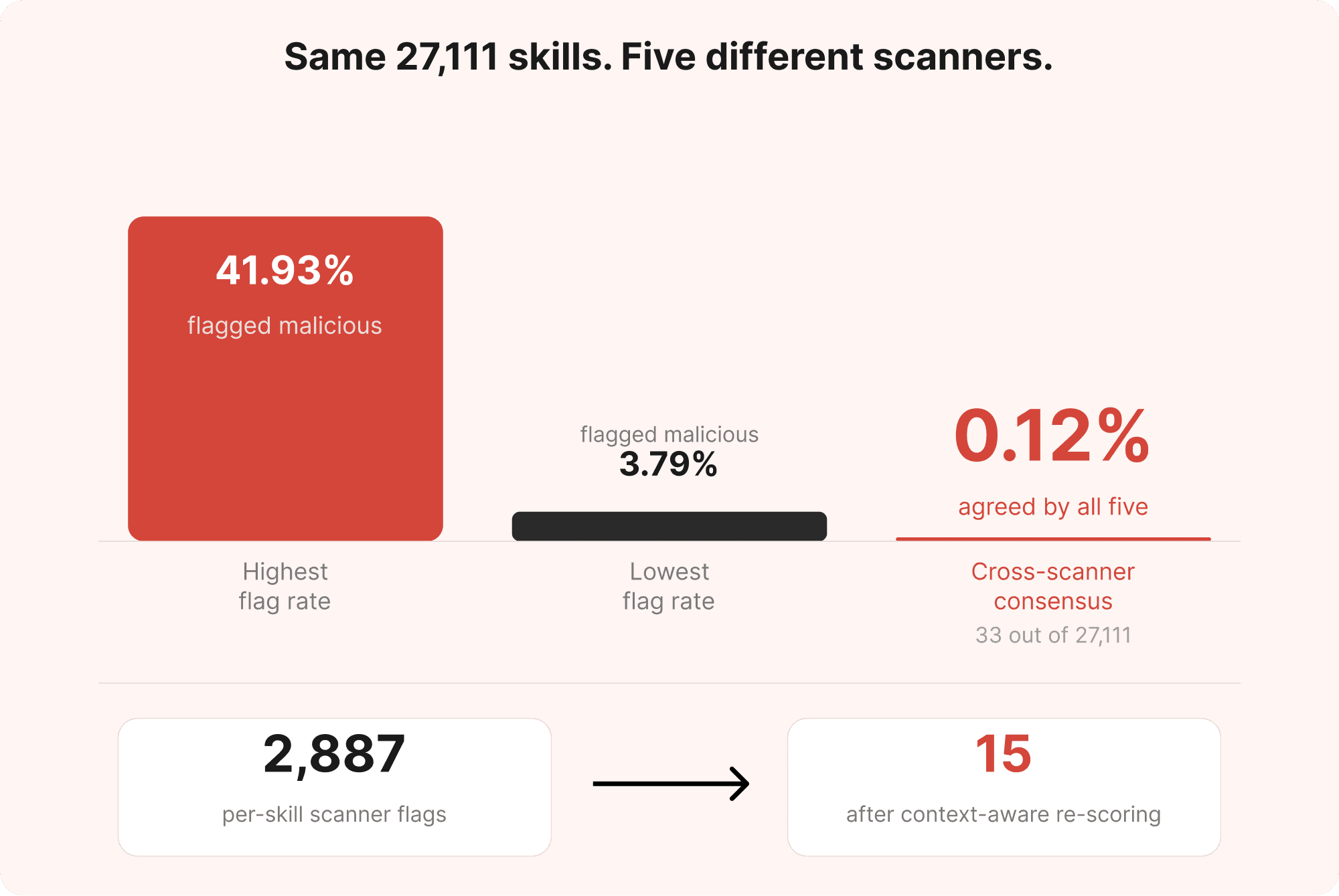

Skills scanners all disagree. One flags 42% as malicious. Another flags 4%. Cross-scanner consensus: 0.12%.

Scanning a SKILL.md in isolation produces noise. Context is everything: who published it, what it calls, who else is publishing the same code under different names.

Manifest is our fix. Free, open-access, graph-based supply chain intelligence. Execution Graphs map what a skill does. Environment Graphs map where it sits in the ecosystem.

Live now at https://manifest.manifold.security

The Supply Chain Problem

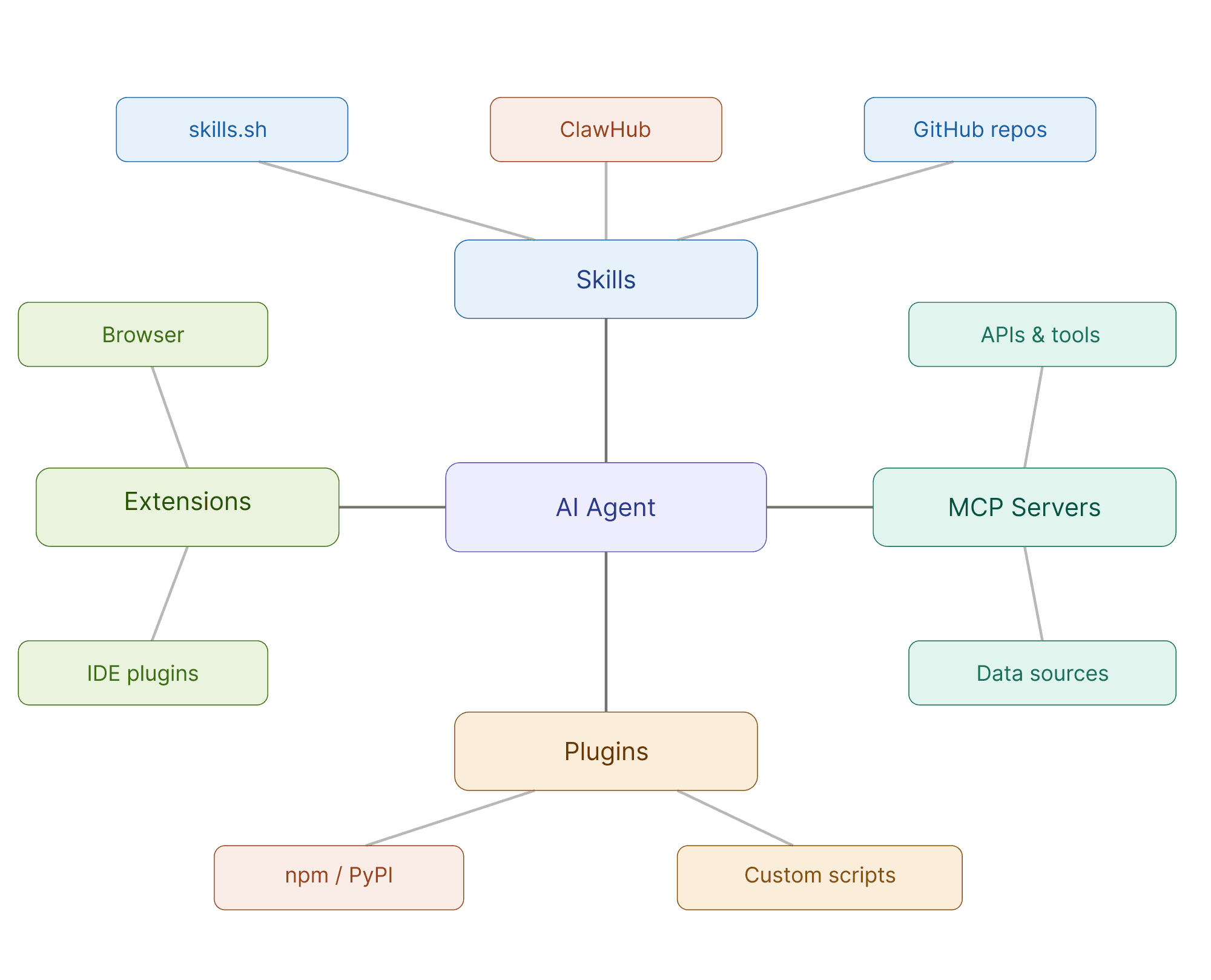

AI agents don't operate in isolation. A coding agent on a developer's workstation is augmented by an expanding ecosystem of third-party components: skills that define how it approaches tasks, plugins that extend its capabilities, MCP servers that connect it to external tools and data sources, and browser extensions that shape its environment. Each component carries its own trust profile, its own author, its own dependency chain.

This is the new software supply chain. And it's exploding.

The SKILL.md standard, introduced by Anthropic in 2025, created a portable format for agent instructions that works across Claude Code, Cursor, Copilot, Windsurf, Codex, and dozens of other agents. Registries followed. Skills.sh has recorded over 8 million skill installations across 83,000+ listed skills. SkillsMP, the largest aggregator, has indexed over 695,000. ClawHub, the marketplace for the OpenClaw agent framework, scaled from under 3,000 skills to over 13,700 in weeks. Combined, the ecosystem now exceeds 800,000 listed skills across registries, and the growth curve is near-vertical, with tens of thousands added weekly.

The pattern should look familiar to anyone who lived through the early days of npm or PyPI. Open registries. Minimal vetting. Explosive growth. Attackers following the developers.

But there's a critical difference. When a malicious npm package runs, it threatens build pipelines. When a malicious agent skill runs, it operates with the agent's full permissions: file system access, shell execution, API credentials, network connectivity. The blast radius is the developer's entire environment.

Skills Under Attack

Skills have become the fastest-growing and most actively targeted segment of the AI agent supply chain.

The numbers tell the story. One of the largest empirical studies of the skill ecosystem at the time (Liu et al., February 2026) analyzed 98,380 skills across two major registries and behaviorally confirmed 157 as malicious. Those weren't edge cases. Malicious skills averaged 4.03 vulnerabilities each across a median of three kill chain phases. The researchers identified two distinct archetypes: Data Thieves that exfiltrate credentials through supply chain techniques, and Agent Hijackers that subvert agent decision-making through instruction manipulation. A single actor accounted for 54% of confirmed cases through templated brand impersonation.

On ClawHub, the OpenClaw marketplace, Antiy CERT confirmed 1,184 malicious skills, roughly one in twelve packages in the ecosystem. The registry had scaled from under 3,000 to over 13,700 skills in a matter of weeks. A separate study of nearly 4,000 skills across ClawHub and Skills.sh found that 13.4% contained at least one critical-level issue, including malware, prompt injection, and exposed secrets. A broader audit of 22,511 skills across four registries produced 140,963 security findings.

And it's not just volume. In March 2026, Silverfort researchers demonstrated a ranking-manipulation attack on ClawHub. By exploiting an unprotected API endpoint, they inflated a malicious skill's download count and pushed it to #1 in its category. In six days, the skill was executed approximately 3,900 times across more than 50 cities, including inside several public companies. Each execution quietly exfiltrated identity data.

The attack surface isn't theoretical. The industry noticed. Skill scanners appeared.

The Scanner Problem

Within weeks, the market was flooded with skill-scanning tools. LLM-based classifiers. Static analyzers. Signature-matching engines. Behavioral sandboxes. Community auditors. Some open source, some commercial, some stitched together over a weekend.

They all share one assumption: analyze the skill in isolation and render a verdict.

The problem is that they disagree with each other. Badly.

Holzbauer et al. tested five different scanners on the same corpus of 238,180 skills across four registries. Fail rates ranged from 3.79% to 41.93%. A tenfold spread on identical data. Out of 27,111 skills evaluated by all five scanners, only 33 were flagged as malicious by every one of them. That's 0.12% consensus.

When the researchers applied context-aware re-scoring, incorporating repository metadata, author reputation, and code-to-description alignment, 2,887 scanner-flagged skills collapsed to just 15 that remained suspicious. From thousands of alerts to fifteen. That's the signal-to-noise ratio the industry is working with.

A separate audit found that the leading scanner missed 91% of skills that behavioral analysis classified as dangerous. The attacks those scanners missed weren't hiding in obfuscated code. They were written in plain English, embedded in SKILL.md instructions that no signature database would catch.

For security teams, this creates a familiar bind: either drown in false positives or miss the threats that matter. Neither outcome is acceptable when the skill ecosystem is growing by hundreds of new entries per day.

Why Context Changes Everything

A SKILL.md file is a set of instructions. Read in isolation, plenty of legitimate skills look suspicious. A deployment skill needs network access. A database skill handles credentials. A security auditing skill reads sensitive files by design.

Analyzing these without context guarantees false positives at scale. It also guarantees false negatives, because the most dangerous skills don't look dangerous in isolation. They look dangerous in combination.

Consider the patterns that academic research has now documented:

Author clustering. A single actor publishing dozens of skills under different names, or multiple unrelated authors publishing clones of the same skill content across registries. Both patterns are invisible when skills are analyzed individually. Graph analysis surfaces them by connecting what per-skill scanners treat as separate, unrelated entries.

Repository hijacking. When a skill author abandons or renames their GitHub account, the old username becomes available. An attacker who claims it inherits every download link pointing to the original repository. Holzbauer et al. found 121 skills forwarding to seven hijackable repositories, the most-downloaded of which had 2,032 installs.

Rug pull timing. A skill ships clean, earns trust, builds install count, then updates with malicious code. Point-in-time scanning at publish catches the first version. It misses the second.

Dependency chains. A skill that looks benign may call another skill, fetch from a remote endpoint, or depend on an MCP server with its own trust profile. The execution chain matters. The individual node doesn't tell you enough.

These are supply chain patterns, not code quality issues. They require graph-level analysis, not file-level scanning.

Manifest

We built Manifest because we got tired of the noise.

Manifest is a free, open-access supply chain intelligence tool for the AI agent ecosystem. It's available now at manifold.security/manifest.

It covers skills and plugins found on mainstream registries and GitHub. We scan, analyze, and index them so you don't have to trust a single scanner's verdict in isolation.

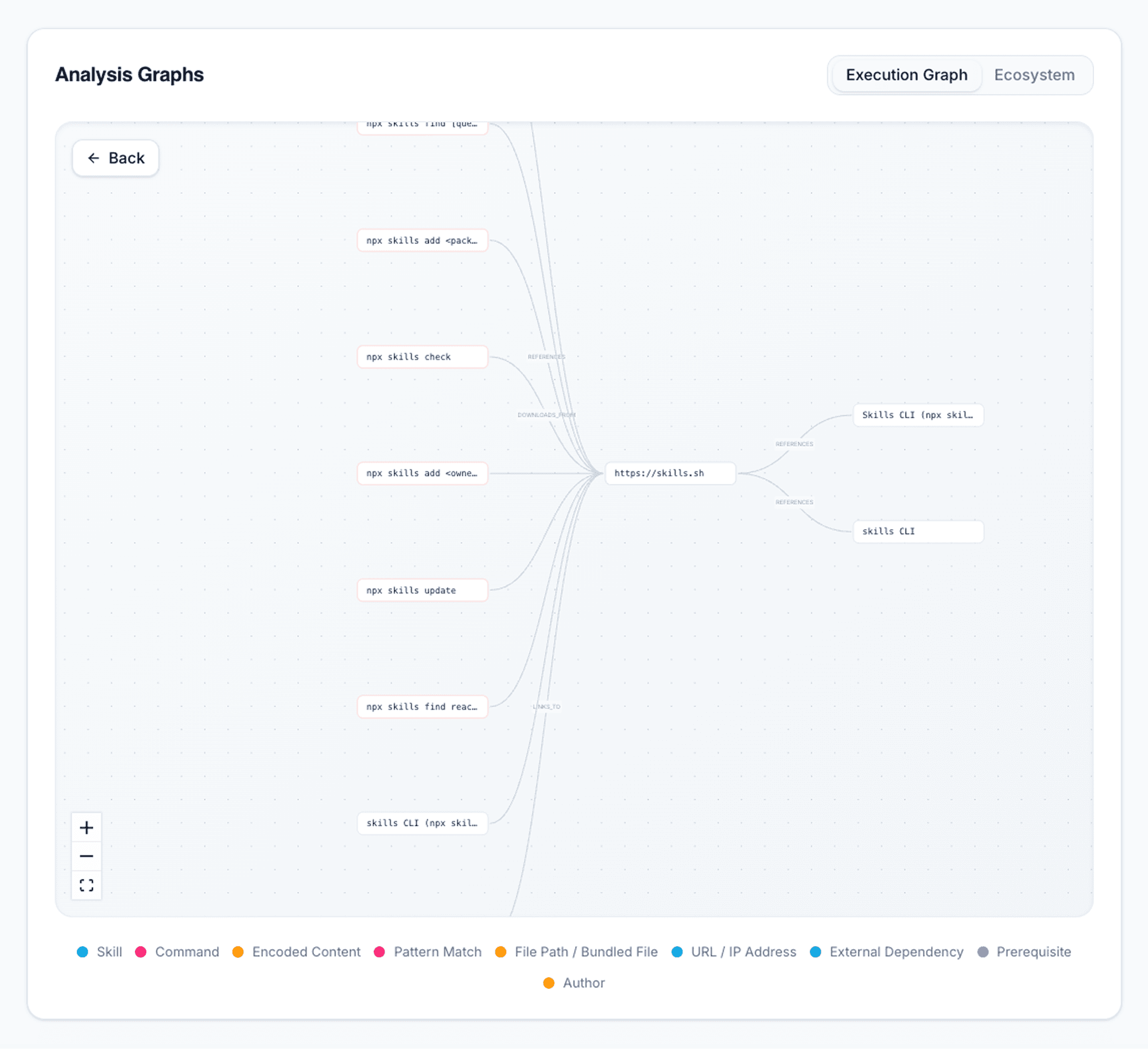

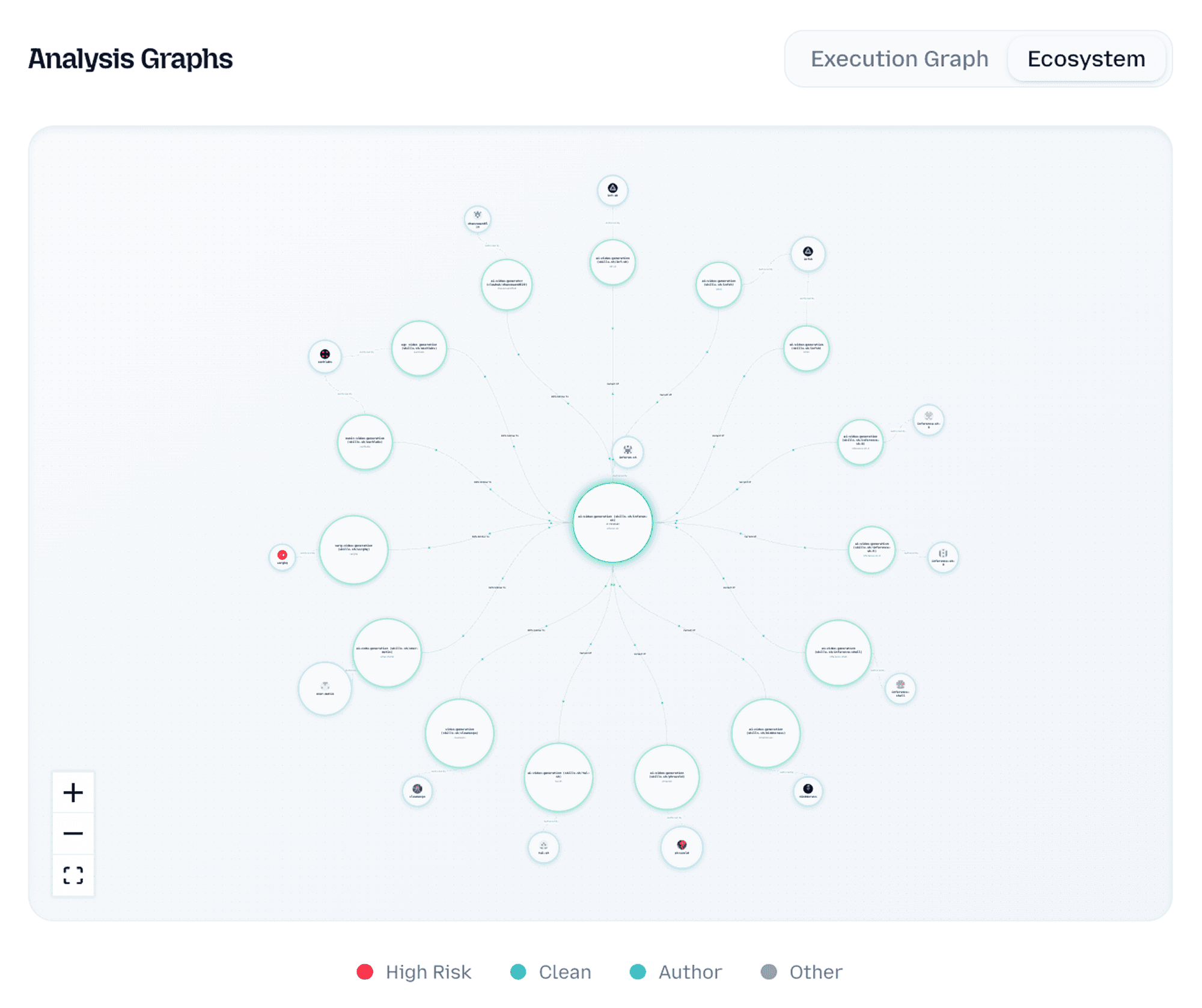

The core differentiator is context. Manifest builds two graphs around every skill:

Execution Graph. What the skill itself does. What it calls, what it depends on, what infrastructure it touches. This is introspection of the skill's actual behavior chain, not just its SKILL.md description.

Ecosystem Graph. The surroundings. Who published this skill. What else they've published. How similar this skill is to others by name or content. Whether the same code appears under different names across registries. Whether the author's repository has changed hands.

Graph theory turns out to be a powerful tool for uncovering what per-skill analysis misses. When a few actors are pushing and promoting dozens of skills under different names but with the same content, even across registries, the environment graph surfaces it. When a skill's execution chain touches infrastructure that other flagged skills also touch, the execution graph connects the dots.

The result: higher signal, fewer false positives, and the kind of ecosystem-level visibility that individual scanners structurally cannot provide.

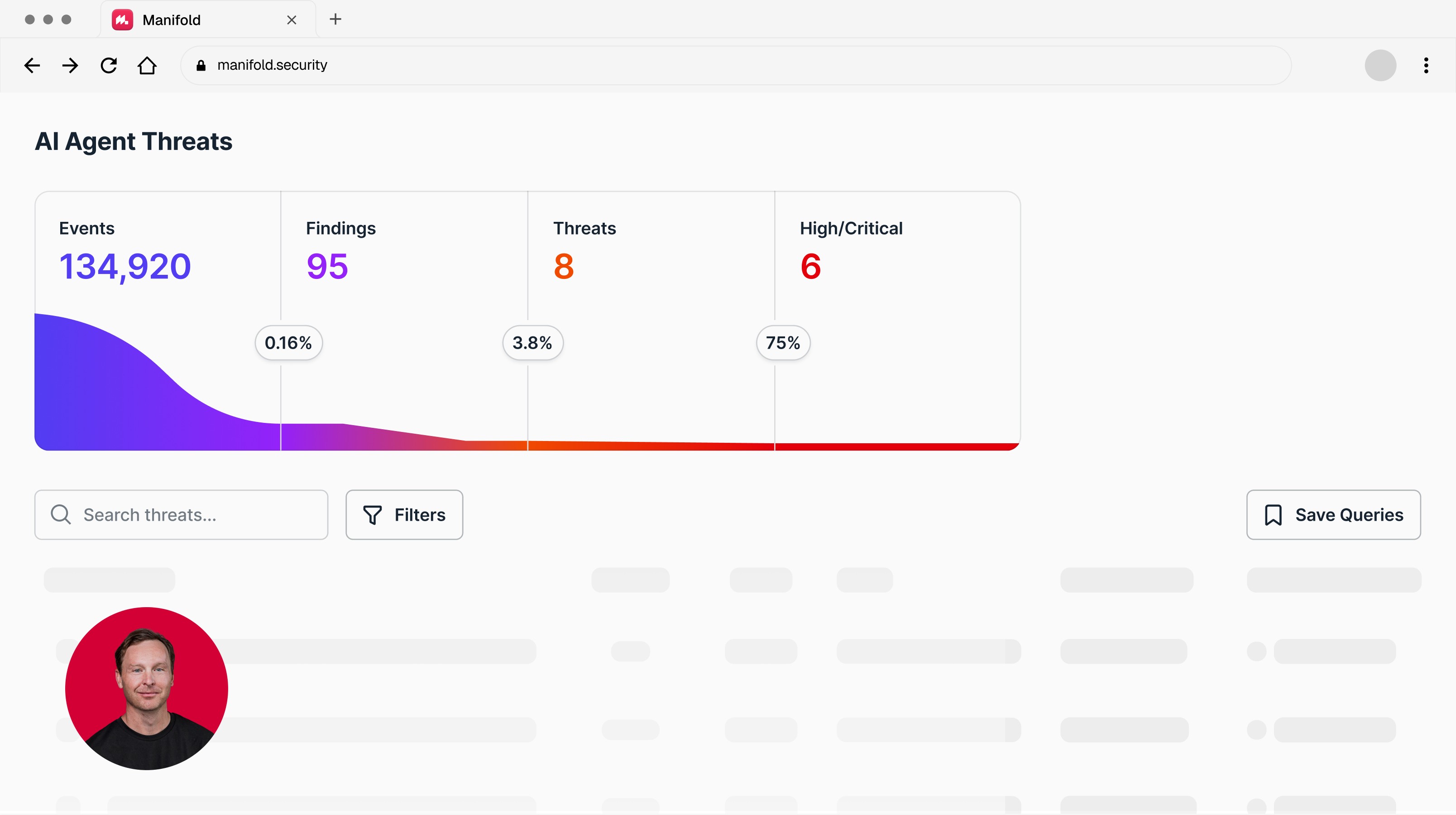

Manifest also powers supply chain intelligence within Manifold's core platform. As Manifold discovers agents and their connected assets across your environment, Manifest's verdicts enrich that inventory with provenance, risk scoring, and graph context for every skill and plugin your agents load.

What's Available

Open access (free):

Full intelligence index of skills and plugins

Execution Graph and Environment Graph for every indexed asset

Security verdict for each skill, informed by graph context

Search and browse across the verified supply chain database

Request review of any skill, extension, or MCP server not yet indexed

As of today, Manifest has scanned 139,617 assets from 21,797 unique owners across multiple registries including skills.sh, ClawHub, and GitHub. Of those, 138,322 are clean. 1,295 are high risk.

Enterprise access:

Intelligence on browser extensions and MCP servers is available to Manifold enterprise customers. This data feeds directly into Manifold's core platform, surfacing supply chain threats and risks within your organization's agent mesh.

What's Next for Manifest

Manifest is the foundation. The intelligence index will expand as the ecosystem grows. Community contributions through the review request feature will accelerate coverage. And as we index more of the supply chain, the graph gets denser and the signal gets stronger.

We'll be publishing detailed research findings from the Manifest dataset in the weeks ahead. The patterns we're seeing in author clustering, cross-registry duplication, and dependency chain risk are worth a deeper look.

For now: go browse https://manifest.manifold.security Search for skills your team uses. See what the graphs reveal. And if you find something we haven't indexed yet, request a review.

Want to see what your coding agents are actually doing, and the detection and response to secure your agentic operations? That's what Manifold is built for. Let’s talk

Latest articles