TL;DR

LLM classifiers (also called AI guardrails or AI firewalls) scan prompts and model outputs for dangerous patterns. First-gen AI security was built on them: data leakage, brand damage, jailbroken outputs. They mitigated some of that.

The trust model inverted. We now connect entire work machines to Claude Code, Cowork, and Cursor for autonomous execution. Agents are insiders with massive access. The security tooling hasn’t followed.

Classifiers watch prompts and model output. But what happens when they miss? A firewall bypass is easy to achieve. Once it’s bypassed, how would you know what happened next?

Classifiers can’t answer that question. Behavioral runtime monitoring can.

“First, a confession”

There’s a particular discomfort in writing a cautionary piece about one of the solutions you helped popularize.

We created LLM Guard, the open-source LLM firewall that became a standard for prompt injection detection. Over 12 million downloads. We founded Laiyer AI and took classifier-based security to market across Fortune 500 enterprises. Two acquisitions later (first by Protect AI, and ultimately by Palo Alto Networks), an entire ecosystem of AI firewalls now exists, built on the approach we helped pioneer.

We had an outsized hand in writing the first chapter of AI security.

So we earned the right to say what comes next.

When Guardrails Were Enough

So what are classifiers? A classifier, in this context, is a model that sits between the user and the LLM and scores text for risk.

Prompt goes in, the classifier checks it against known attack patterns, assigns a confidence score, and decides whether to block or pass it through. The same logic runs on the output side. That's the entire security surface these tools cover: text in, text out.

Early language models had few built-in defenses. Reinforcement Learning from Human Feedback (RLHF) introduced basic safety training (don’t explain how to build a weapon, don’t generate slurs), but the models themselves were wide open. A well-worded prompt could make them say anything. The problem was partly architectural: early deployments stuffed sensitive information directly into system prompts, so a successful jailbreak meant immediate data exposure.

The first concerns were concrete: sensitive data leaking through chatbot responses. Brand damage when someone manipulated a customer-facing bot into saying something embarrassing, screenshotted it, and posted it. Remember the Chevy dealership chatbot that was tricked into offering a $1 Tahoe? That was the threat model. Compliance teams watching a new technology they couldn’t control.

Classifiers addressed some of these. They scanned prompts and outputs for dangerous patterns, produced confidence scores, and gave security teams something to point at in an audit. The OWASP Top 10 for LLM Applications mapped the threat landscape. Frameworks emerged. Budgets followed.

The assumption underlying all of it: if the prompt looks clean, the system is safe.

For a while, that held. Deployments were small enough that a block-and-review approach was workable. When a missed prompt injection meant a bad screenshot or an embarrassing customer interaction, organizations could absorb the risk. The blast radius was reputational.

That world ended when agents arrived.

Coding agents don’t produce suggestions for humans to review. They read entire codebases, execute shell commands, access secrets, make commits, and deploy changes. The OWASP Agentic Top 10, published in late 2025, maps ten agent-specific risk categories that didn’t exist in the original LLM threat model. Most happen entirely outside the layer classifiers watch.

When a missed prompt injection means credential access, code exfiltration, or production infrastructure compromise, the blast radius isn’t reputational. It’s operational. As the Partnership on AI concluded in its 2025 framework on agent failure detection: human oversight alone cannot scale to monitor agent behavior in real time.

Agents Arrived. The Tooling Didn’t Follow.

Agents don’t just talk. They call tools, read filesystems, execute shell commands, talk to MCP servers, and make outbound network calls. A classifier watching prompts and model output is watching the least dangerous part of the system.

Adaptive Attackers Already Win

Classifiers try to tell safe prompts from dangerous ones. Attackers try to make dangerous prompts look safe. This is an arms race, and the defense is losing. As our own Ax Sharma reported on in CSO, the safety constraints themselves disproportionately burden defenders: security teams operate within the rules while attackers iterate freely around them.

When researchers from OpenAI, Anthropic, and Google DeepMind subjected 12 recent defenses to adaptive attacks, every defense fell. Attack success rates exceeded 90% across the board. The defenses had originally reported near-zero attack success. The gap between reported performance and adversarial performance is the gap between lab conditions and a motivated attacker.

Separately, researchers at Lancaster University tested character injection and adversarial ML evasion techniques against six production guardrail systems, including Azure Prompt Shield and Meta Prompt Guard. In some configurations, evasion reached 100%. The attacks maintained the adversarial payload’s functionality while becoming invisible to the classifier.

The defense must be right every time. The attacker only needs to be right once.

Blind to Language, Blind to Code, Blind to Context

Even when classifiers aren’t being actively evaded, they’re structurally blind in three ways that matter for agents.

Most classifiers are trained on English prompts. Multilingual support quality is variable, and in most cases it simply doesn’t work. An attacker who writes their injection in Mandarin, Arabic, or mixed-script text bypasses a classifier trained on English jailbreak patterns.

Most classifiers are built on BERT based models: compact language model architecture designed for text classification. Trained primarily on Wikipedia and web text, these models don't understand code. This makes them nearly useless for protecting coding agents, which is the exact deployment where the stakes are highest. A prompt injection embedded in a code comment, a shell command, or a pull request description looks like normal developer workflow to a text classifier that has never seen an agentic workflow.

And most classifiers scan individual prompts. Not the full conversation context. Not the tool-call chain. A multi-turn attack that looks benign at each step is invisible to a system that evaluates messages in isolation. An agent can pass through a clean-looking prompt and subsequently trigger tool invocations, file reads, and exfiltration that never touch the classifier again.

These aren’t edge cases. They’re the normal operating conditions for coding agents running on developer workstations.

Scaling Doesn’t Fix an Architectural Limit

The industry has poured billions into classifier-based AI security. That creates momentum. The natural response is to push the approach further: bigger models, more training data, tighter thresholds. But cost goes up, latency goes up, and accuracy still doesn’t hold against adaptive attacks.

Even Anthropic deploys Sonnet-class models, full frontier LLMs, as constitutional classifiers to secure interactions. That comes with real cost and latency. If the company that built Claude needs a frontier model just to classify prompts, the approach has a scaling problem.

Self-persuasion research exposes a deeper issue. Researchers demonstrated 84% jailbreak success by targeting the model's internal reasoning process, not the prompt itself. The model generates its own justifications for bypassing safety constraints. Researchers have described this as a new form of social engineering, one where the attacker persuades the model to persuade itself. You cannot train away a vulnerability that lives in the architecture of autoregressive text generation.

Bhattarai et al. formalized this in a 2026 paper: autoregressive language models process all tokens uniformly, making deterministic command-data separation unattainable through training alone. Probabilistic compliance is not security. It is an arms race with a structural ceiling, and the ceiling is mathematical.

The Attack Surface Classifiers Cannot See

Even a perfect classifier would miss the threats that cause the most damage, because those threats happen outside the prompt-to-response boundary entirely. MCP server supply chain attacks. Tool descriptor injection. Credential access through inherited permissions. Code exfiltration via authorized tool calls. None of these pass through a classifier.

MCPSecBench, the first systematic security benchmark for the Model Context Protocol, tested 17 attack types across four attack surfaces against Claude Desktop, OpenAI, and Cursor. Over 85% of identified attacks successfully compromised at least one platform. Current protection mechanisms achieved an average success rate of less than 30%. A separate benchmark, MSB, tested 12 attack types across nine LLM agents and found an overall average attack success rate of 40.71%. Out-of-Scope Parameter attacks, where agents blindly pass unreasonable parameters to tools, hit 74%.

The OWASP Agentic Top 10 maps the full landscape: agent goal hijacking, tool misuse, identity abuse, agentic supply chain vulnerabilities, unexpected code execution, memory poisoning, and more. The framework’s documented incidents include Amazon Q prompt poisoning that risked file wipes, multiple Cursor CVEs enabling remote code execution, the EchoLeak zero-click exploit against Microsoft 365 Copilot, and the Gemini Trifecta that chained indirect prompt injection across Google services.

Count how many of those pass through the inference boundary a classifier monitors. Almost none.

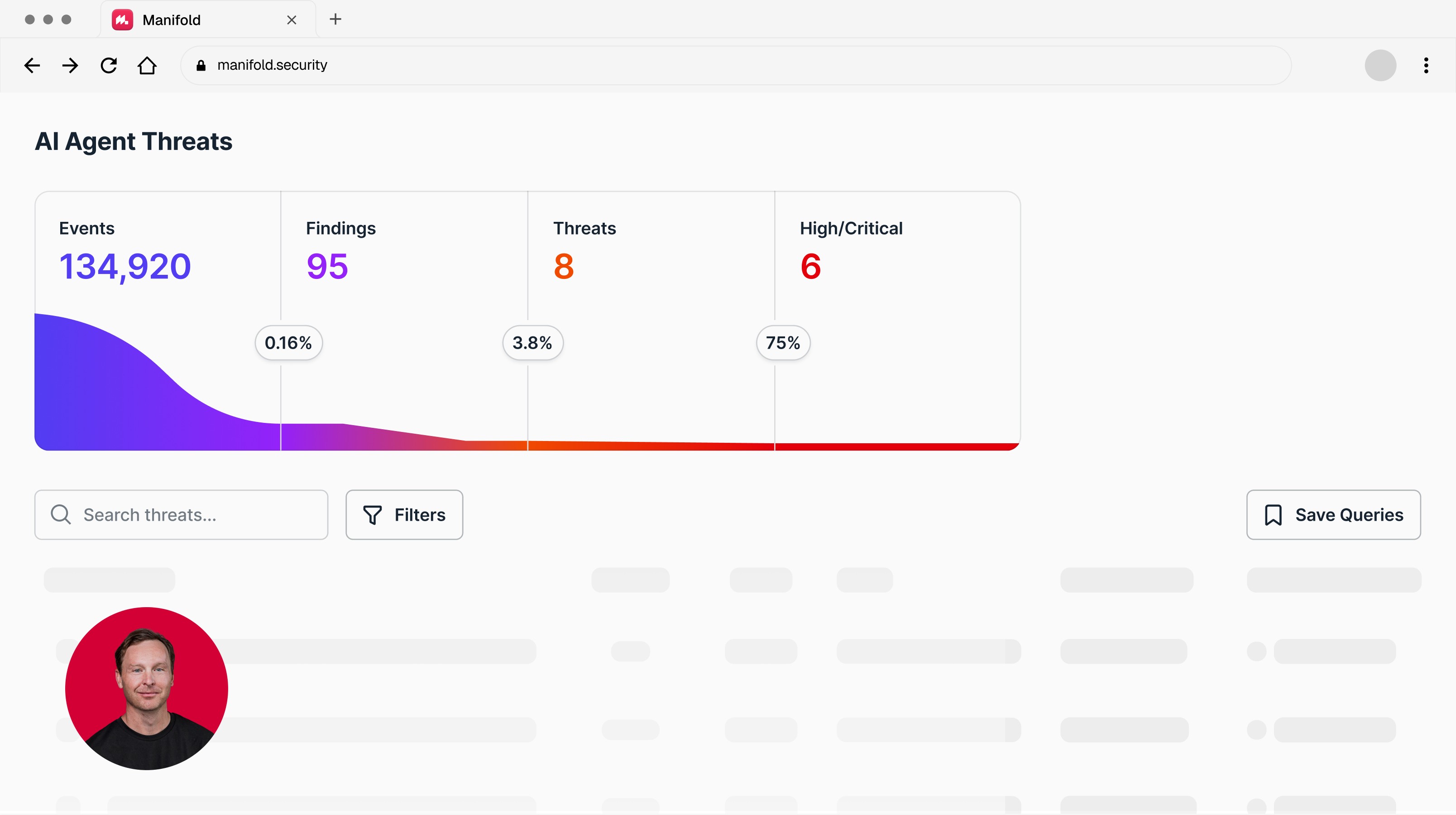

Want visibility into what your coding agents are actually doing at runtime? See Manifold in action.

What to do now?

Classifiers still have a job. Content filtering at inference catches toxic outputs, blocks obvious jailbreaks, satisfies compliance for chatbot deployments. Keep them.

But once an agent can invoke tools, access filesystems, and talk to external services, the security question shifts from what the model said to what the agent did. That requires different telemetry.

Consider a coding agent on a developer’s workstation. A classifier sees the prompt go in and the response come out. Behavioral monitoring sees everything else: the agent read 40 files in a credential directory, then made an outbound HTTP call to an IP it had never contacted before. No prompt was flagged. The behavioral signal is the only signal that exists.

Meta’s security team proposed the Agents Rule of Two: an agent should satisfy no more than two of three properties (untrusted inputs, sensitive system access, external state changes). Useful as a design-time constraint, but it carries the same tension as sandboxing. Too rigid and the agent stops being productive. Too permissive and the constraint doesn’t hold. Either way, you still need runtime visibility into what agents actually do.

For Security Teams:

Recalibrate what classifiers are for. They're a meaningful, but limited, content firewall placed only at one of several trust boundaries within an agentic system. Not an agent security strategy.

Implement behavioral runtime monitoring across the agent execution stack (i.e., actions within and across all agent trust boundaries). File access patterns, shell execution sequences, outbound network calls, tool invocation graphs, MCP server inventory drift. Baseline what normal looks like. Alert on deviation. A coding agent that reads source code, accesses environment variables, and then writes to an outbound API in the same session is a pattern worth investigating. No classifier produces this signal.

Define agent-specific incident response playbooks. When behavioral monitoring flags anomalous activity, the team needs a clear sequence: isolate the endpoint, terminate the agent process, and preserve the full tool-call log for investigation. An agent that just read 40 credential files and made an outbound HTTP call needs the same containment urgency as a compromised user account.

For Engineering / DevOps:

Instrument the full agent execution stack: tool invocations, file operations, shell executions, network calls. Treat MCP server inventory as a security artifact. If your observability stack doesn't capture these events, you have no visibility into what agents do after the prompt.

Limit agent capabilities to match organizational policy. Restrict which tools agents can call, scope file and network access to project boundaries, and require approval gates for high-risk operations like production deployments or credential access. The principle is least agency: never grant more autonomy than the task requires.

Want to see what your coding agents are actually doing, and the detection and response to secure your agentic operations? That’s what Manifold is built for. See it in action.

Latest articles