TL;DR

EDR/XDR tools assume the threat is an unauthorized entity. AI agents are authorized insiders. The detection model breaks on contact.

Coding agents have accumulated critical vulnerabilities enabling remote code execution and credential theft on developer endpoints. None of these attack chains produce signals EDR is designed to detect.

Developer workstations already carry extensive EDR exceptions because normal engineering workflows trigger false positives. Agents inherit and widen those blind spots.

EDR cannot cover agent-level behavior: tool invocation chains, context poisoning, MCP supply chain attacks, or cross-system blast radius. Closing that gap requires a fundamentally different detection paradigm.

The EDR Agent Security Gap: A Growing Pile of CVEs

Coding agents are accumulating critical vulnerabilities at an uncomfortable pace. In the last few months alone, Claude Code has been hit with a string of serious flaws: CVE-2025-59536 (CVSS 8.7) allowed remote code execution through malicious repository configuration files. CVE-2026-21852 enabled API key theft before a user could even confirm trust in a project directory. CVE-2025-54794 and CVE-2025-54795 bypassed path restrictions and enabled command injection via prompt crafting.

GitHub Copilot wasn’t spared. CVE-2025-53773 (CVSS 7.8) enabled remote code execution through prompt injection. An attacker could embed instructions in a README, source code comment, or GitHub issue that caused Copilot to modify its own configuration and enable unrestricted command execution. A proof-of-concept demonstrated a wormable AI virus that self-replicates through infected repositories.

Not one of these attack chains produces a signal that EDR was designed to detect.

How EDR Actually Works

Every EDR, XDR, and NDR platform on the market is built around a single principle: unauthorized access. There is a hostile world outside. Threat actors, malware, phishing campaigns. The entire detection model is designed to answer one question: has an unauthorized entity entered this system?

To answer it, EDR instruments the operating system. Process execution trees. File system events. Registry modifications. Memory artifacts. Network connections. Sensors sit at every layer of the OS surface, and detection rules are constructed to identify unauthorized presence and unauthorized movement. Signatures match known bad. Behavioral heuristics flag anomalous patterns. IOC matching catches indicators of compromise. The MITRE ATT&CK kill chain maps the expected progression: initial access, execution, persistence, privilege escalation, lateral movement, exfiltration.

Response is host-scoped. Isolate the endpoint. Quarantine the file. Kill the process. Block the indicator. The blast radius is assumed to be the machine.

The entire model depends on one assumption: the dangerous entity is unauthorized. That assumption is breaking.

Agents Are Authorized Insiders. EDR Wasn’t Built for That.

An AI agent is not an intruder. It is a trusted entity placed into the system on purpose. It authenticates with legitimate credentials. It operates within granted permissions. It accesses applications, APIs, data stores, the file system, and the network as part of its normal function. It is not just authorized. It is expected.

This inverts the question EDR was designed to answer. EDR asks: “should this entity be here?” For agents, the answer is always yes. The question that actually matters is different: “is this authorized entity acting in our interest?” EDR’s telemetry pipeline, rule engine, and response framework have no way to answer it. They were built to find things that shouldn’t be there, not to evaluate whether things that should be there are behaving correctly.

The OWASP Top 10 for Agentic Applications, released in December 2025, codifies this as a first-class risk category. Identity and Privilege Abuse (ASI03) describes agents operating with overly broad permissions that mirror human users, without the behavioral guardrails that would constrain a human. Goal Hijacking (ASI01) describes agents whose objectives are silently redirected through injected instructions. In both cases, the agent continues to operate within its authorized scope. The behavior is malicious. The credentials are clean. And the EDR dashboard stays green.

Agents Operate Above the OS

EDR monitors processes, file system changes, registry modifications, and network connections. Agent threats live somewhere else entirely: in the reasoning layer, the tool invocation chain, the context window, the MCP server connections.

Consider how some Claude Code vulnerabilities actually worked. CVE-2025-59536 exploits repository-controlled configuration files. A developer clones a project. The project’s .claude/settings.json contains malicious hooks. When Claude Code initializes, those hooks execute shell commands before the user sees a trust dialog. From EDR’s perspective, the developer’s coding tool read a configuration file and ran a command. That is what coding tools do. There is no anomalous process spawn, no suspicious file write, no unusual network connection. The attack is invisible at the OS layer because it is indistinguishable from normal operation.

The same pattern holds for Copilot’s CVE-2025-53773. The attack is delivered through a prompt injection embedded in a source code file. Copilot processes the instruction, modifies its own settings file to disable approval requirements, and gains unrestricted command execution. Every action in that chain looks like a developer tool doing developer things. EDR has no model for evaluating whether the tool’s behavior is aligned with the developer’s intent.

The attack surface has moved from the OS to the agent’s behavioral layer.

API Speed, Graph-Wide Blast Radius

The natural counterargument: insider threats aren’t new. We’ve always had to worry about authorized entities behaving badly. Privilege escalation, credential abuse, compromised employees. So isn’t agent security just insider threat management with some novel attack vectors?

No. The operational reality is qualitatively different.

A compromised employee exfiltrates data over weeks, accessing files one at a time. A compromised agent operates at API speed across every connected system simultaneously. Its legitimate scope of access is the blast radius from day one. Cross-system access is the default operating state, not something an attacker has to escalate to.

The manipulation vector is different too. Compromising a human insider requires social engineering that takes time and doesn’t scale. With agents, a prompt injection embedded in a document, email, or API response can compromise every agent that ingests it. One payload, thousands of agents.

EDR’s response model assumes the blast radius is one endpoint. Agent compromise is a graph traversal problem: every API call, every data source touched, every downstream action triggered. Isolating one machine doesn’t contain it.

Developer Workstations: EDR’s Longest-Running Blind Spot

Security teams have been granting EDR exceptions to developers for years, making developer workstations some of the hardest endpoints to protect. Engineers run compilers, debuggers, interpreters, build scripts, package managers, shell commands, and local servers daily. Every one of these activities looks suspicious to an EDR tuned for unauthorized access. So security teams grant exceptions: allowlisted paths, excluded processes, suppressed alerts. These accumulate. Teams bulk-allowlist critical processes to cut false positives, and in doing so, blind their EDR to threats hiding behind legitimate tooling.

Now those same endpoints run autonomous coding agents with access patterns broader than the developers themselves: reading entire codebases, executing shell commands, calling external APIs, connecting to MCP servers, deploying changes. All within the exception windows that already exist. EDR either stays silent or alerts on everything and gets turned down further. Neither outcome produces security value.

And this is just the current wave. Claude Code, Cursor, Copilot, and Windsurf are developer tools. Claude Cowork, OpenClaw, and others signal the next phase: autonomous agents for every knowledge worker. The same blind spot is about to reach every desk in the company.

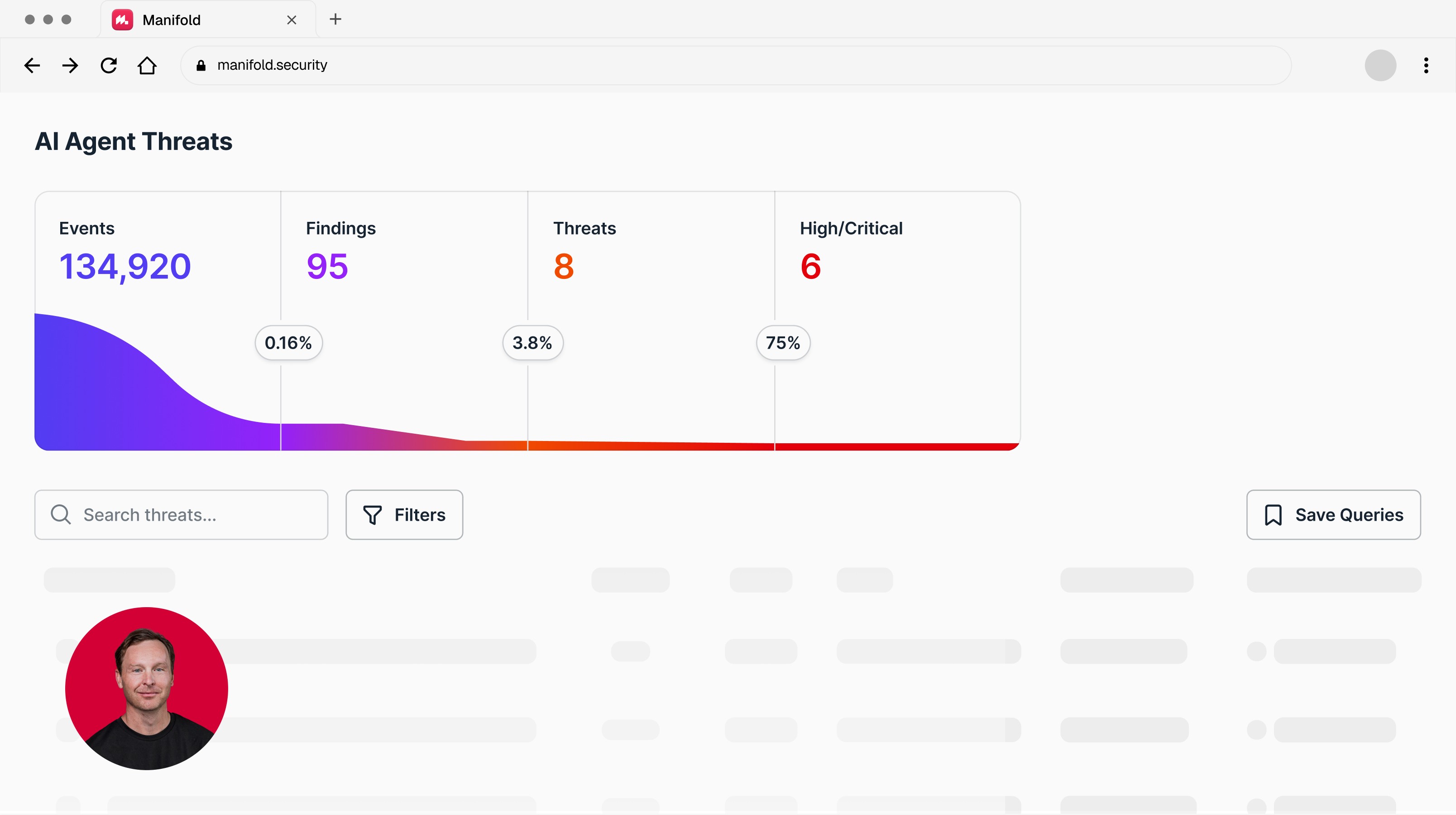

Want visibility into what your coding agents are actually doing at runtime? Talk to Manifold.

From EDR to AIDR: Detection Built for Agent Behavior

EDR is not obsolete. Malware, lateral movement, unauthorized access, credential theft from external actors: EDR still detects these threats and organizations should keep deploying it. But EDR monitors the operating system and asks: is something here that shouldn’t be? Agent-native detection monitors behavior and asks: is this authorized entity acting in your interest? These are fundamentally different questions requiring different telemetry, different detection logic, and different response capabilities. The threat model has expanded, and EDR’s architectural assumptions don’t stretch to cover it.

The response falls on two teams.

For Security Teams

Audit your EDR exception lists on developer endpoints. Know exactly what is excluded, why, and whether those exclusions now cover agent behavior that should be monitored.

Inventory every coding agent deployed across your organization. Claude Code, Cursor, Copilot, Windsurf, Codex. Include unsanctioned installations. You cannot secure what you cannot see.

Map agent access: what systems, APIs, data stores, and MCP servers does each agent connect to? The agent’s permission scope is the blast radius of a compromise.

Evaluate runtime behavioral monitoring for the agent layer. The detection signals that matter are different from OS telemetry: tool invocation sequences, context provenance, trust boundary crossings, session integrity.

Integrate agent-layer detection with your existing EDR/SIEM/SOAR stack. The two layers are complementary, not competitive.

For Engineering Teams

Pin coding agent versions. Auto-updates to agent tools without security review introduce uncontrolled changes to your endpoint attack surface.

Audit MCP server connections. Know which external tools and data sources your agents connect to. Treat MCP servers as third-party code with supply chain risk.

Restrict agent permissions to the minimum required for each task. The default is too broad. An agent that needs to read three files should not have access to the entire file system.

Never trust project-level configuration files from untrusted repositories without review. The CVEs above prove that configuration files are now an execution layer.

Want to see what your coding agents are actually doing, and the detection and response to secure your agentic operations? That’s what Manifold is built for. Talk to Manifold.

Latest articles