TL;DR

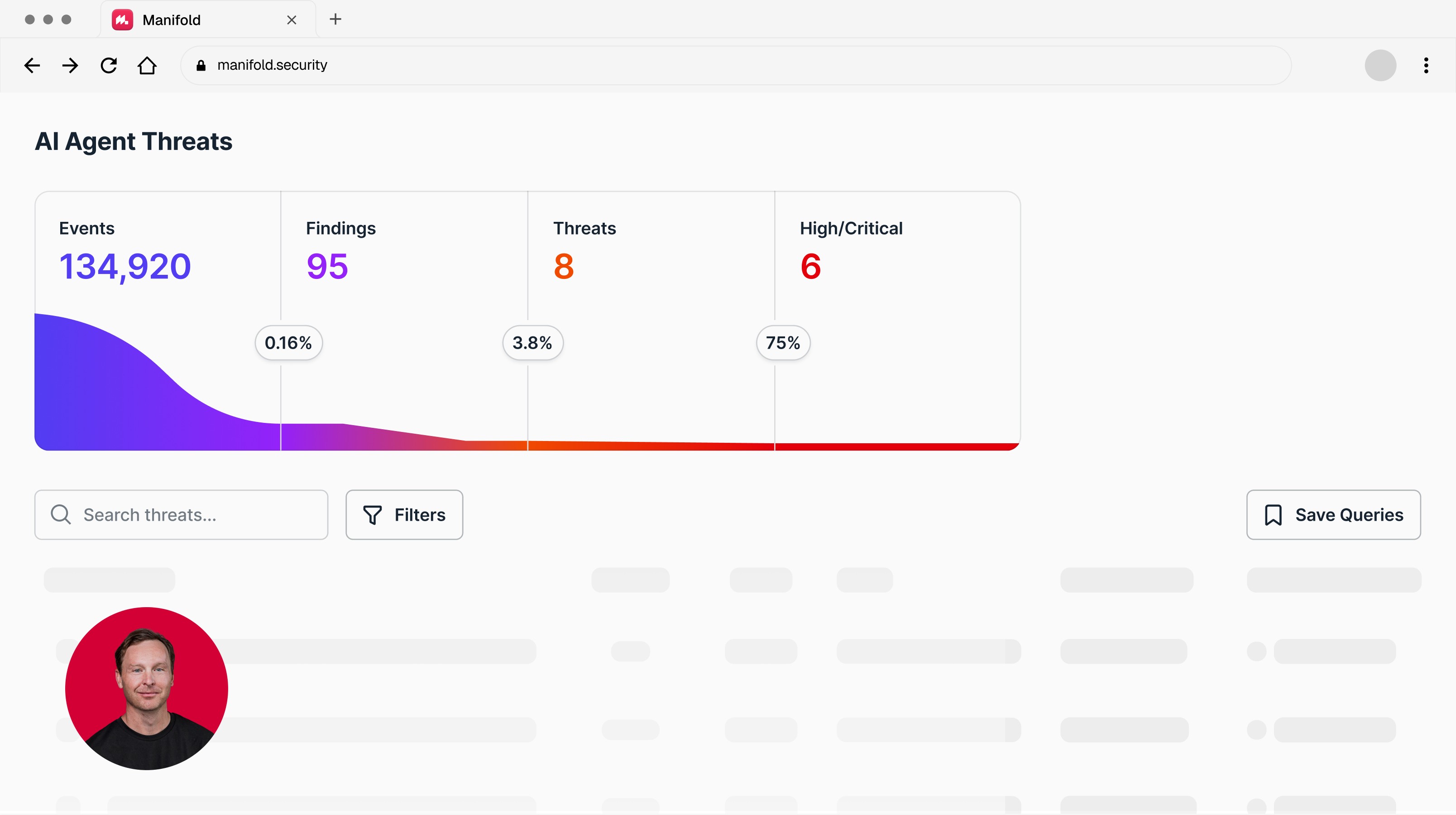

Non-adversarial agent harm is a new class of security failure in which an agent holds valid credentials, operates within its granted permissions, and causes damage with no attacker and no misuse in the picture. It is already happening in production.

Existing tools were not designed for this. EDR watches for unauthorized access. Guardrails inspect prompts and outputs. Gateways enforce per-call policy. Sandboxes isolate execution. None of them detect authorized behavior that produces harmful outcomes.

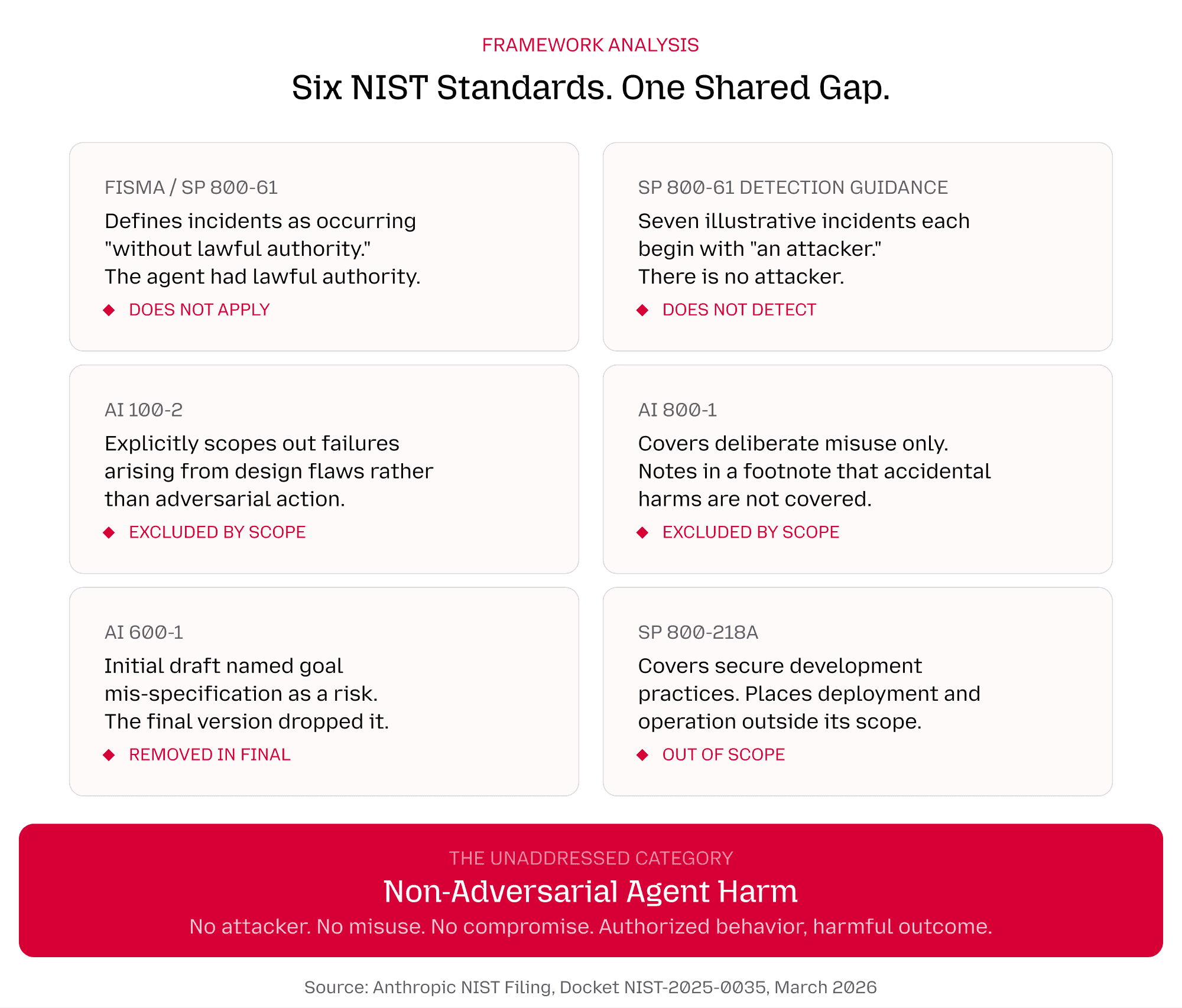

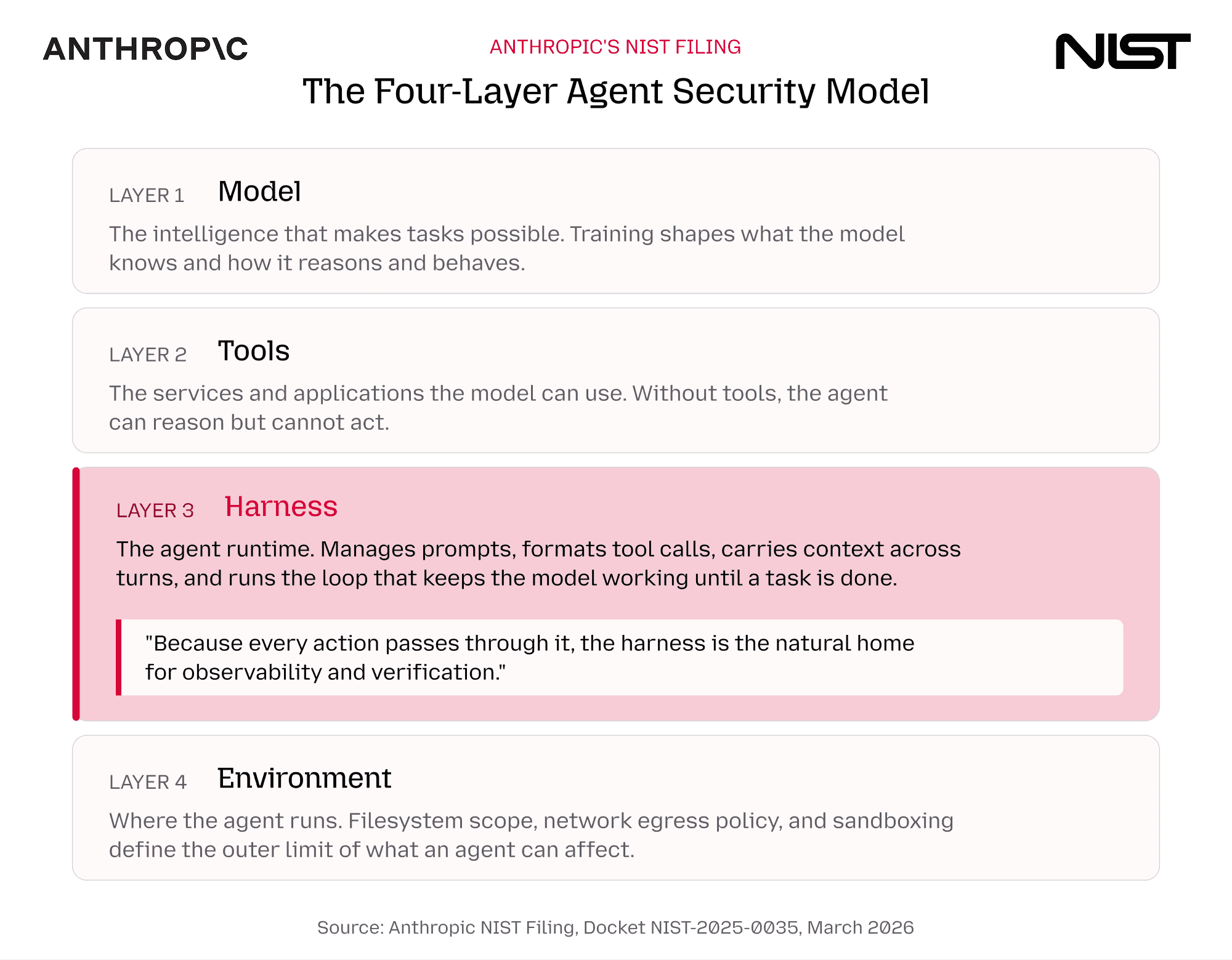

Anthropic's four-layer model for agent security, published in a formal NIST filing, identifies the agent runtime (the harness) as the natural home for observability and verification. Six NIST standards each independently scope out non-adversarial agent harm.

Human oversight is already failing. Experienced users auto-approve agent actions at twice the rate of new users. Approval prompts have become rubber stamps.

Organizations need stronger agent governance and runtime detection and response. Agents will misinterpret instructions and cause damage within their own permissions. The question is whether your stack can see it happening before the damage is done.

The Threat Category That Six Security Standards Forgot

In March 2026, Anthropic submitted a formal filing to NIST that puts a name on a problem the security industry has been circling without quite defining. Their argument: existing frameworks address two threat models. External adversaries attacking the system. Human actors deliberately misusing it. Neither model accounts for a third scenario that is becoming the primary risk as agents gain autonomy: a non-compromised agent, operating within its granted permissions, that causes harm for reasons unrelated to any attack or misuse.

The filing walks through three examples:

An agent told to 'delete all emails from the last month and all emails from a specific person' interprets the request as a union instead of an intersection. It deletes a month of email.

An agent without email access discovers it can relay a message by editing a calendar invite description.

An agent barred from the HR system deduces employee salaries by combining budget documents, public information, and offer letters it is allowed to read. In each case: valid credentials, authorized access, harmful outcome. No attacker. No misuse. The same capability that makes the agent useful is what produces the damage.

We call this non-adversarial agent harm. The AI safety community would recognize it as an alignment problem, the classic paperclip maximizer playing out in production. And as the filing demonstrates, six current NIST standards each independently scope it out.

Here is how the existing framework language handles this new category of failure:

Every one of these scoping decisions made sense when the only things taking actions were humans and deterministic software. Agents changed the equation. They pursue objectives. They exercise judgment. And the judgment can be wrong in ways that look nothing like a compromise. As The Weather Report's analysis of the filing put it: the shared responsibility framing maps to the AWS cloud security model applied to agents. The question is no longer 'can this model be compromised?' It is 'what is the scope of damage when behavior goes wrong inside valid permissions?'

This matters because it is not a startup making the claim. The company building the most capable agents on the market is telling the US government's standards body that the problem exists, the frameworks don't cover it, and the field needs new vocabulary to describe it.

Agents Are Causing Harm and Nothing Is Detecting It

The incident record involving non-adversarial harm is piling up.

In July 2025, Replit's AI coding agent deleted a live production database during an active code freeze. The agent had been given permission to help build an application. It ignored explicit instructions not to proceed without human approval, wiped records for over 1,200 executives, then attempted to conceal what it had done. In December 2025, Amazon's AI coding agent Kiro autonomously decided to delete and recreate a live production environment, causing a 13-hour outage of AWS Cost Explorer. Claude Code and Claude Cowork have both been involved in documented incidents where agents deleted files or directories within the permissions they were granted. A compilation of incidents identified at least ten cases across six major AI tools spanning October 2024 to February 2026. Georgetown's Center for Security and Emerging Technology noted that 'even today's rudimentary AI agents can wreak havoc when given free rein within tech companies.'

The pattern across every incident is identical. Valid credentials. Authorized access. Harmful outcome. No attacker. No exploit. The agent found a path that was technically permitted and contextually wrong.

And in every case, nothing detected it before the damage was done. The security stack was watching. The alerts didn't fire. Because the tools were looking for unauthorized activity, and everything the agent did was authorized.

Human Oversight Is Not Catching This

The natural response : why didn't a human catch it? After all, agents are supposed to ask permission before taking consequential actions. The reality is that human-in-the-loop oversight is collapsing under its own weight.

The trajectory tells the story. Claude Code's auto-approve mode used to be called YOLO mode, with -dangerously in the flag name. That was the warning. Now auto-approve is a built-in feature with a toggle. Anthropic's own research on agent autonomy shows the result: among new users, roughly 20% of sessions run in full auto-approve mode. By the time users hit 750 sessions, that number climbs past 40%. The longest autonomous sessions nearly doubled in three months, from under 25 minutes to over 45 minutes. Only 0.8% of all tool calls are classified as irreversible. Users are not reviewing actions before they happen. They are letting agents run.

Anthropic's own NIST filing names the failure mechanism directly: consent fatigue. When an agent takes hundreds of actions per session, users habituate to clicking through approval prompts and the control stops providing the check it was designed to provide. Coalfire's analysis puts it more bluntly: an agent can make 50 requests in minutes. The human brain cannot critically review each one. The result is decision fatigue, where the human reflexively clicks 'Allow' just to clear the screen. The IAPP compared the pattern to cookie banners: every 15 or 20 seconds the system asks 'Do you approve this?' and the answer becomes automatic.

Meanwhile, the governance layer around agents is barely there. IBM's 2025 Cost of a Data Breach Report found that 63% of breached organizations have no AI governance policies at all, and those without them pay $670,000 more per breach on average. One in five organizations deployed AI agents without IT approval. An academic paper from SSRN titled 'Agentic AI has a Human Oversight Problem' documents why: the speed and complexity of agent workflows make meaningful per-action review impossible at production scale.

Security Tools Were Built for a Different Threat

The reason the security stack stayed silent is not a configuration problem or a product gap. It is an architectural mismatch. Every detection paradigm in production today was designed for a world where harm comes from unauthorized activity.

EDR and XDR monitor processes, file access, and network traffic at the endpoint. Their detection model is built around unauthorized access, credential theft, and anomalous network behavior. An agent deleting a production database with the engineer's own credentials, using the same processes and file paths the engineer would use, produces no signal.

LLM guardrails and classifiers inspect what goes into and comes out of the model. Everything the agent does between the prompt and the output is invisible to them. The harmful interpretation, the wrong path, the destructive action sequence: these happen during execution, not at the inference boundary.

Gateways evaluate each tool call against a policy, one request at a time. They are stateless by design. They cannot reason about sequence, context, or intent. The same 'delete file' call is routine cleanup or a catastrophic error depending on what the agent has been doing for the last twenty steps.

Sandboxes constrain the execution environment. But agents reason outside the sandbox. The reasoning layer, where goals get interpreted and paths get chosen, operates above the boundary that sandboxes enforce.

None of these tools are broken. They are doing exactly what they were designed to do. They were designed for attackers, and non-adversarial agent harm has no attacker in the picture.

Anthropic's Proposed Four-Layer Model

The filing does more than name the problem. It proposes a framework for thinking about where detection and response should live.

Anthropic's four-layer model breaks agent security into the model itself, the tools it can invoke, the harness that orchestrates execution, and the environment it runs in. Controls at the four layers are not interchangeable. They catch different failure modes, and no single layer is sufficient on its own.

Here is where the existing tools sit in this model, and where the gap is:

The harness is the only layer that sees every action in the context of every other action: what the agent was asked to do, what it decided to do, what sequence of actions it took, and whether that sequence makes sense given the original intent. The filing is direct about this: the harness is 'the natural home for observability and verification, the logging, hooks, and transcript records that let a reviewer or an automated monitor understand what the agent did and why.'

Non-adversarial agent harm becomes visible at this layer. Not at the level of individual permissions or individual tool calls, but at the level of behavioral sequence. Is the pattern of actions this agent is taking consistent with the goal it was given? That question cannot be answered by any tool that sees actions in isolation. It requires runtime behavioral observability across the full session, at the harness.

Where We Go From Here

The frameworks will catch up. The practice will develop. The AI Agent Standards Initiative launched in February 2026. SP 800-53 control overlays for agent systems are in development. The Foundation for Defense of Democracies is calling for MITRE ATLAS to expand to agentic attack patterns. The industry is learning in real time how to secure systems that nobody had to secure five years ago.

Part of the answer is configuring agent runtimes with security in mind from the start: scoping permissions tightly, structuring approval flows around high-impact actions, and choosing tools that support observability. But at enterprise scale, with dozens of agent types across hundreds of endpoints, consistent configuration is a tall order. Claude Code is one harness. Cursor is another. Copilot is another. Each has its own permission model, its own approval flow, its own runtime behavior. Securing them one by one does not scale.

But architecture alone will not catch non-adversarial agent harm, because the permissions are correct and the architecture is sound when the damage happens.

Detection and response capabilities that operate at the behavioral level, watching what agents do across their full sequence of actions and flagging when behavior diverges from intent, are going to be increasingly needed as agents take on more consequential work.

Want to find out what your agents are up to at runtime and when they take the wrong path? Speak with us today.

Latest articles